Student by enrollment, researcher by curiosity, occasional casualty of exam season

Saurab Mishra

I started ML for fun and ended up deep in research papers, failed runs, and exam-week chaos.

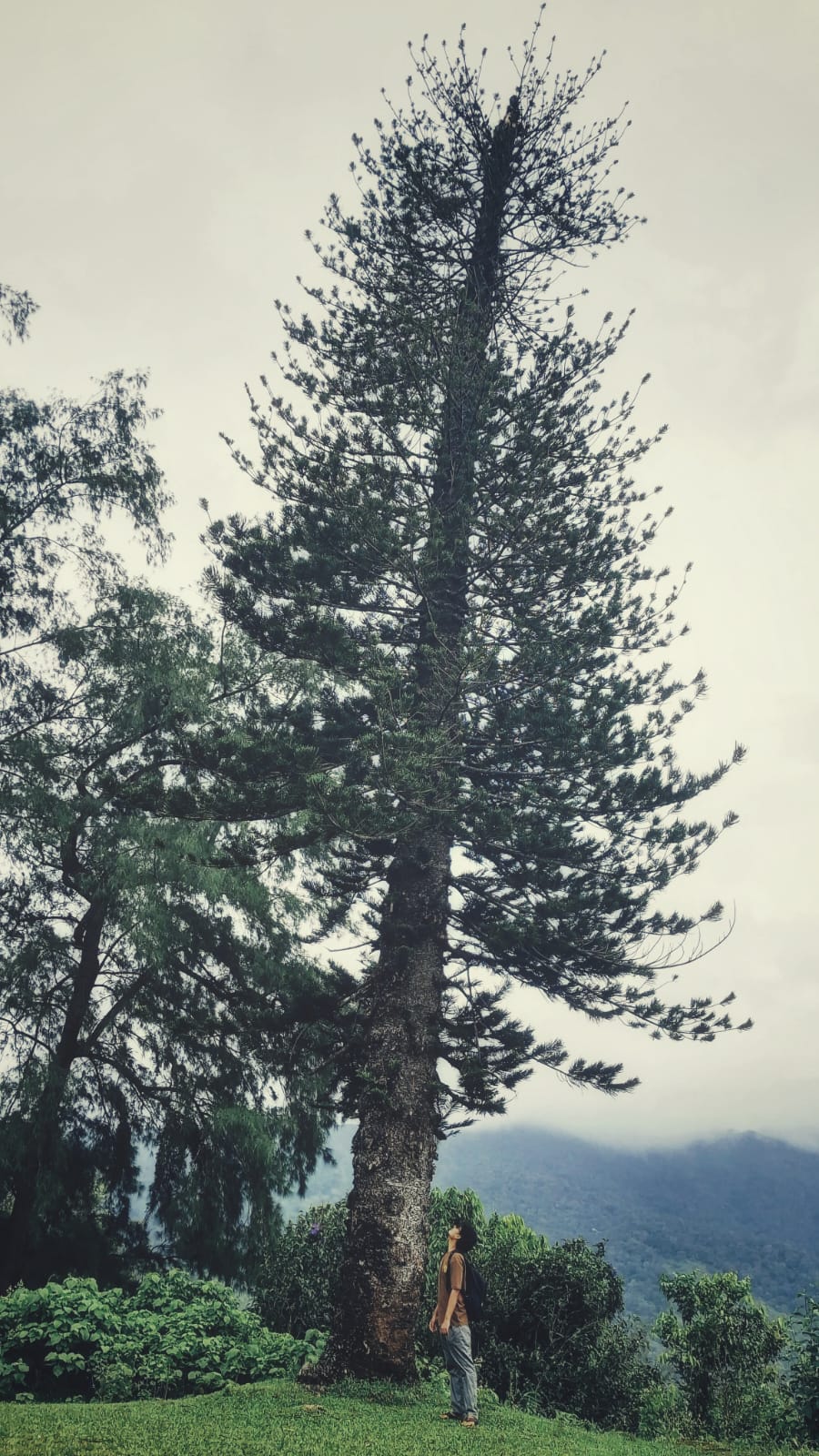

Professional research posture. Unprofessional internal monologue.

My interests want LLMs, systems, and cool things to build. My course list wants abstract math proofs, surprise exam patterns, and emotional damage. Every semester feels like two different operating systems fighting for the same RAM, and I am the unlucky process manager asking: why is this theorem in my AI timeline right before finals?